To Design a Better Future, Embrace the Uncomfortable

So much of being a designer is being willing to embrace the uncomfortable. We create new products, experiences, and systems for people whose needs are different from ours, sometimes really different.

While we designed a new family of acoustic amps for Fender, we squeezed into an Airstream for two months to better understand the touring musician lifestyle. And when we wanted to better understand the environmental impact of the things we design, we visited a sewage treatment plant to see what happens when a product can't be recycled.

We go after big problems that have never been solved. And by going all in on the unfamiliar and uncomfortable, we’ve come up with creative solutions that we might not have imagined before.

As an industrial designer, I’m a builder. I experience the world with all my senses and apply my tangible building skills to make experiences and systems. Technology inspires me because it has always been an important vehicle for progress. With technology racing forward at an ever-increasing pace, some advancements can affect us on an unprecedented scale. As shapers of the future, we must strive to understand the consequences and implications of emerging technology. We do this by asking ourselves uncomfortable questions about possible outcomes during the design process in areas like healthcare, the future of work, and the circular economy.

To better understand how emerging technologies could affect our day-to-day lives, my colleagues from multiple design disciplines (industrial design, data science, communications, and interaction design) and I embarked on an internal IDEO project that could give us some clues. We got curious and asked ourselves: How might the way we communicate with each other change with new technology? How might we collaborate in the future when we can show up in different realities? What could be possible if the digital and physical worlds blend seamlessly into each other? How will we protect our privacy if we can teach devices our most personal preferences, and what will happen to our data?

We named our project The Discomfort Zone.

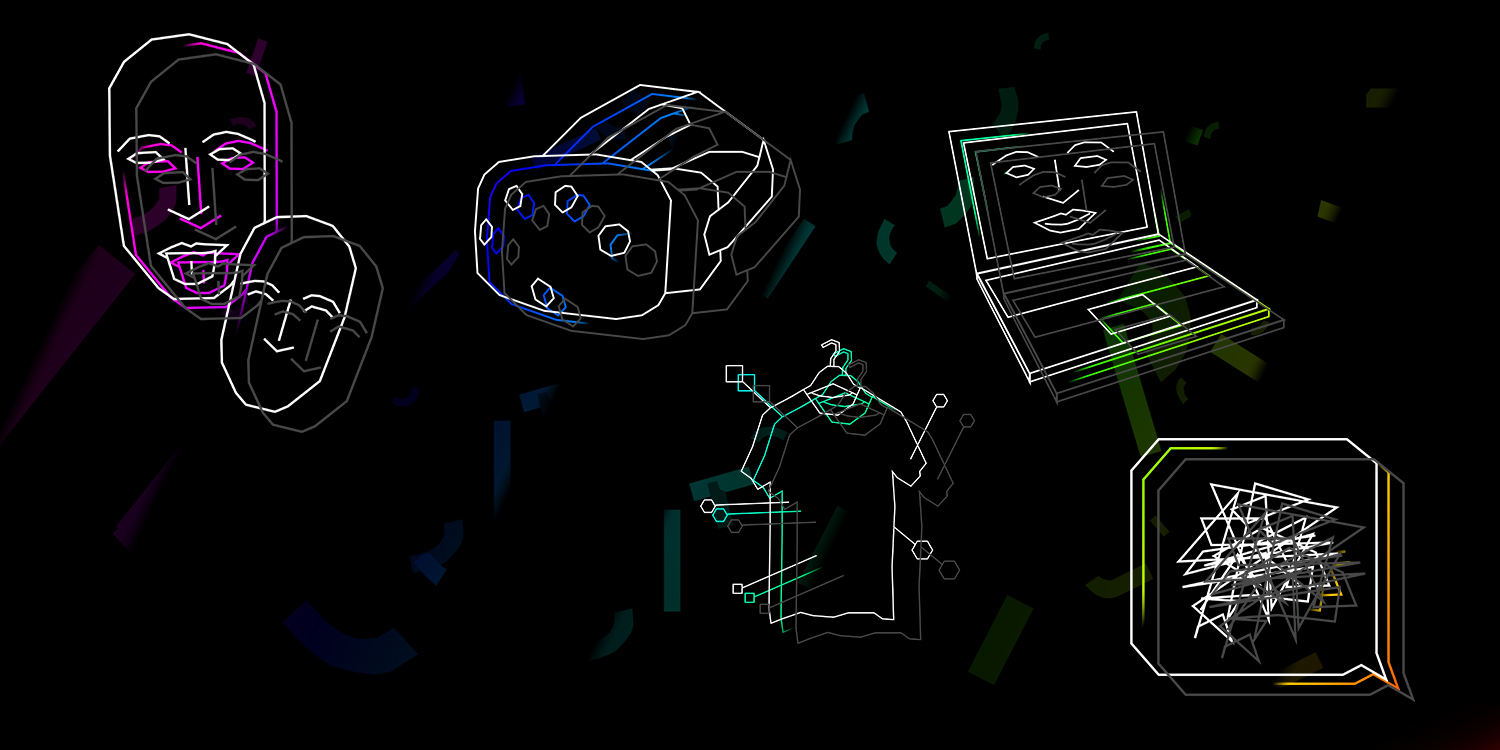

In order to explore these uncomfortable, out-there questions, we had to experience them ourselves, and to experience the future we had to build it. Here are the tools that we built:

Personalized Intelligence: by building a camera that can be taught to take the best self-portraits based on individualized feedback, we experienced the idea of actively teaching machines our preferences. The user trains it on their ideal lighting, placement, composition, head tilt, and facial expressions. While it may seem like a trivial thing to create, teaching machines to see us as we see ourselves can show us the path to building other devices, such as health devices, that adapt to each user's preferences and feedback.

Complementary Media: a device that can be taught to recognize facial expressions and emotions, then generate an appropriate, customized emotional expression for each user, like a personalized emoji. It helped us imagine more depth and expressiveness online using artificial intelligence. A tool that conveys real human emotion could lead to improved communication among children, for example.

Shared Realities: a tool, bridging the physical and virtual, that allows workers operating in VR to sense the presence and movements of colleagues around them as if they are on the same plane. An exploration of how virtual and augmented technology might improve collaboration, it allows people to respond to virtual coworkers’ cues without fully disrupting work in the virtual environment.

Network Empathy: code that enables a user to anticipate how large social or interconnected user networks will react to messages before they are posted, bringing deeper understanding and empathy to online communication. With cues from large online networks, smart technology becomes even smarter and empathetic interaction online becomes easier—especially when it is applied to high-profile communications, or at mass scale among networks that might be discussing politics, health, or education.

Meta Identity: code that is invisible to the naked eye can be embedded in an object to make it readable by a machine, not unlike a barcode, and give it meaning online. Branding and production methods might evolve toward this physical tagging method, so that users can easily learn about physical objects they see and find them online. Making the physical as searchable as the Internet could further empower consumers to align their purchase decisions with their beliefs and values.

As designers, it is our responsibility to explore the discomfort zone, design holistically, and consider the full range of possibilities of what we create. For example, as much as Network Empathy was intended to be an inclusive tool, it could also be used to manipulate public opinion by predicting the reaction of large audiences. If we use Personalized Intelligence to teach our devices our most personal preferences, what becomes of this new personal data? And who is the owner of this information: the device, the platform, or the individual?

Because technology will serve us on an even more personal level, the stakes are high. Designers are uniquely positioned to be the connectors in an ever more complex ecosystem of stakeholders, and we must ensure that when a tool leaves the lab, it enters an ecosystem with safeguards in place so that it has a positive impact.

We’re at the dawn of something big and unknown. New technologies are shaping the future in ways we haven’t even thought of yet. Some of them will have extraordinary benefits, while others, through unforeseen capabilities or even sinister intentions, may have unwanted consequences. But as designers, it’s up to us to explore them early, dive into the unfamiliar, and do what we can to shape the future for the better. We’d love for you to join us here in The Discomfort Zone.

The Discomfort Zone was a collaboration among many designers at IDEO. Special thanks to all involved, including the core design team: Leo Marzolf, Jochen Weber, Brooke Thyng, Christian Ramsey, and Nan Tsai.
